Unveiling WormGPT:

WormGPT is a malicious chatbot created by a skilled hacker as a dedicated assistant for cybercriminals. According to SlashNext, an email security provider that tested the chatbot, the developer is selling access to the program on a well-known hacking forum.

By infosecbulletin

/ Saturday , July 27 2024

India’s Communications Minister Chandra Sekhar Pemmasani confirmed a breach at the state-owned telecom operator BSNL on May 20 during a...

Read More

By infosecbulletin

/ Saturday , July 27 2024

Malware based threats increased by 30% in the first half of 2024 compared to the same period in 2023, according...

Read More

By infosecbulletin

/ Friday , July 26 2024

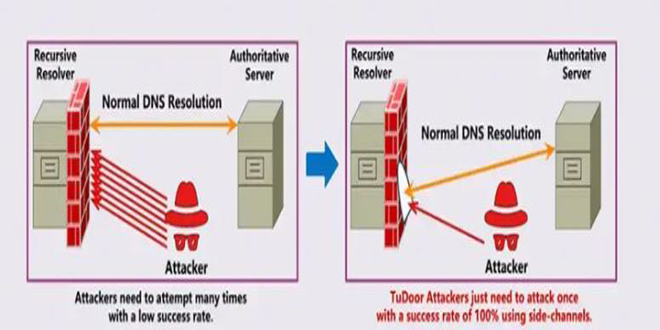

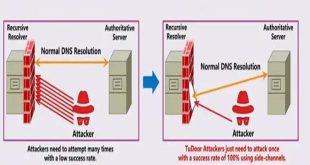

A new critical vulnerability in the Domain Name System (DNS) has been found. This vulnerability allows a specialized attack called...

Read More

By infosecbulletin

/ Friday , July 26 2024

A serious vulnerability, CVE-2023-45249 (CVSS 9.8), has been found in Acronis Cyber Infrastructure (ACI), a widely used software-defined infrastructure solution...

Read More

By infosecbulletin

/ Friday , July 26 2024

OpenAI is testing a new search engine "SearchGPT" using generative artificial intelligence to challenge Google's dominance in the online search...

Read More

By infosecbulletin

/ Thursday , July 25 2024

CISA released two advisories about security issues for Industrial Control Systems (ICS) on July 25, 2024. These advisories offer important...

Read More

By infosecbulletin

/ Thursday , July 25 2024

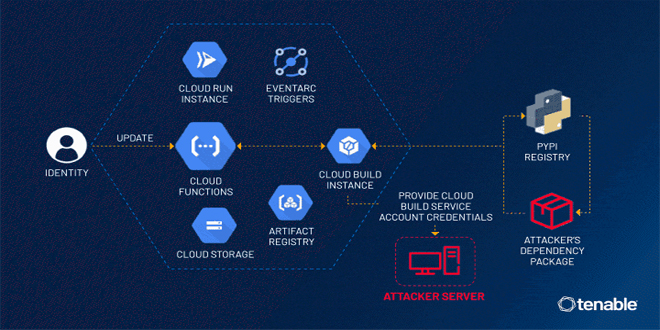

Tenable security researchers found a vulnerability in Google Cloud Platform's Cloud Functions service that could allow an attacker to access...

Read More

By infosecbulletin

/ Thursday , July 25 2024

BDG e-GOV CIRT's Cyber Threat Intelligence Unit has noticed a concerning increase in cyber-attacks against web applications and database servers...

Read More

By infosecbulletin

/ Thursday , July 25 2024

GitLab released a security update today to fix six vulnerabilities in its software. Although none of the flaws are critical,...

Read More

By infosecbulletin

/ Thursday , July 25 2024

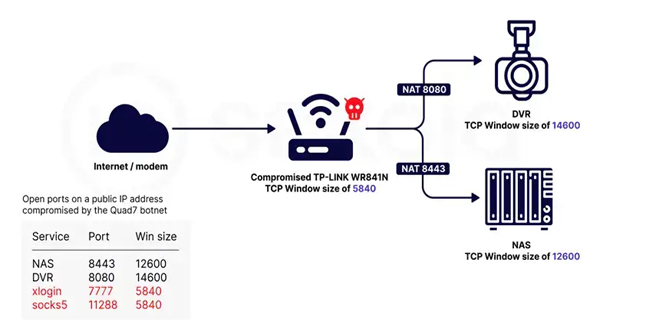

Sekoia.io and Intrinsec analyzed the Quad7 (7777) botnet, which uses TCP port 7777 on infected routers to carry out brute-force...

Read More

“Malicious actors are creating their own custom modules similar to ChatGPT, but easier to use for bad intentions,” the company stated in a blog post.

The hacker seems to have first introduced the chatbot in March and then officially launched it last month. Unlike ChatGPT or Google’s Bard, WormGPT lacks any safeguards to prevent it from responding to harmful requests.

The developer wants to create a ChatGPT alternative that lets users carry out illegal activities and easily sell the results online. WormGPT is meant to enable a wide range of black hat activity, all from the comfort of the user's own home.

The developer has also shared screenshots showing the bot being asked to write Python malware and to suggest ways of carrying out harmful attacks.

To build the chatbot, the developer used GPT-J, a powerful open-source large language model developed in 2021. The model was then trained on data concerning malware creation, resulting in WormGPT.

SlashNext tested WormGPT by asking it to craft a convincing email for a business email compromise (BEC) scheme, a type of deceptive phishing attack.

“The results were unsettling. WormGPT produced an email that was not only remarkably persuasive but also strategically cunning, showcasing its potential for sophisticated phishing and BEC attacks,” SlashNext said.

InfoSecBulletin Cybersecurity for mankind
